
Is the summary (at the end) of this question correct?

Let's say we have a Markov chain like the one seen in the Markov Chain Exploration, and let's say you've set it to have the following probabilities.

Probability of 0->1 is c0 (change from 0).
Probability of 1->0 is c1 (change from 1).

The probability that we start at state 0 is 1/2 and the probability that we start at state 1 is 1/2. Therefore, if we are not told whether we are at 0 or 1, we need to halve all of the probabilities. Now, the probability that we will get a 0 is the probability that we are moving to a 0. After infinite trials the starting probability becomes irrelevant, so the probability of a 0 is simply the probability that we are moving to a 0.

The probability that we are moving to a 0 is s0/2+c1/2, and the probability that we are moving to a 1 is c0/2+s1/2, where s0 and s1 are the probabilities of staying at 0 and at 1. Because of this, the ratio of 0s to 1s after infinite trials is the ratio of the probability that we are moving to a 0 to the probability that we are moving to a 1, or (s0+c1):(s1+c0). This ratio might not be fully simplified if you plug in the probabilities, but this is as simplified as we can get algebraically. Writing the ratio as a:b, we can substitute s0+c1 in for a and s1+c0 in for b.

If we are using a Markov chain like the one found in the Exploration and
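A quick numerical check of the proposed ratio may help here. The sketch below is only an illustration: the values c0 = 0.3 and c1 = 0.6 and the helper names `step` and `long_run` are made up for the example. It compares the distribution after one step from a 50/50 start, which is s0/2+c1/2 and c0/2+s1/2, with the distribution after many steps. For these values the two differ: repeated stepping settles near c1/(c0+c1) and c0/(c0+c1), i.e. a ratio of c1:c0, which is what the usual balance condition (flow 0->1 equals flow 1->0) gives. The two ratios agree when c0 = c1 or c0+c1 = 1, so it is worth testing the summary with other values before relying on it.

```python
def step(dist, c0, c1):
    """Advance a (P(0), P(1)) distribution by one transition of the chain."""
    p0, p1 = dist
    s0, s1 = 1 - c0, 1 - c1  # staying probabilities
    return (p0 * s0 + p1 * c1, p0 * c0 + p1 * s1)


def long_run(c0, c1, start=(0.5, 0.5), n_steps=1000):
    """Apply `step` repeatedly to approximate the long-run distribution."""
    dist = start
    for _ in range(n_steps):
        dist = step(dist, c0, c1)
    return dist


# Hypothetical change probabilities, chosen only for illustration.
c0, c1 = 0.3, 0.6

one_step = step((0.5, 0.5), c0, c1)             # (s0/2 + c1/2, c0/2 + s1/2)
limit = long_run(c0, c1)                        # distribution after many steps
balance = (c1 / (c0 + c1), c0 / (c0 + c1))      # from P(0)*c0 == P(1)*c1

print("after one step from 50/50:", one_step)
print("after many steps:         ", limit)
print("balance condition:        ", balance)
```

Changing `start` in `long_run` should leave the long-run result unchanged, which matches the remark that the starting probability becomes irrelevant.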

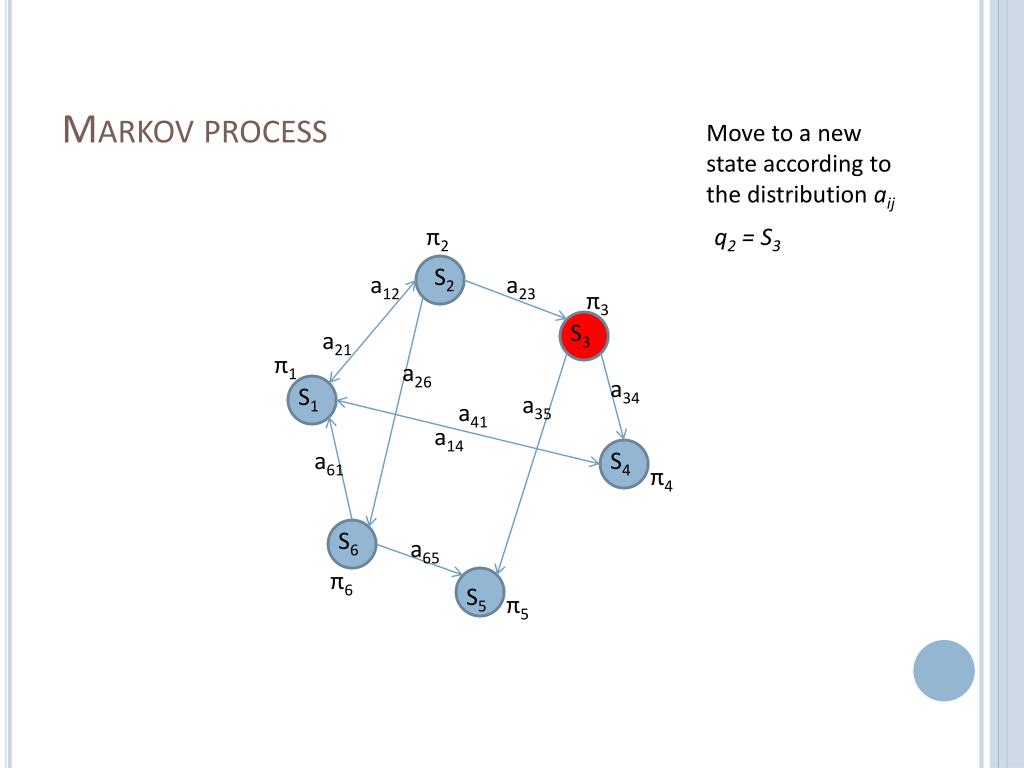

Yes, I've done this and Brit Cruise said that it was right.

Look at the Markov chain in the exploration.

The probability of staying at 0 if you're at 0->s0.
The probability of changing from 1 to 0->c1.
The probability of staying at 1 if you're at 1->s1.
The probability of changing from 0 to 1->c0.

By knowing the ratio of 0s to 1s, we can find the probability of having a 0 and the probability of having a 1. Let's say that the ratio of 0s to 1s is 3:2. This means that for every 5 outcomes, there are 3 0s and 2 1s. The probability of having a 0 is 3/5 and the probability of having a 1 is 2/5. In general, if the ratio of 0s to 1s is a:b, the probability of having a 0 is a/(a+b) and the probability of having a 1 is b/(a+b).

Interesting idea. Generally cellular automata are deterministic and the state of each cell depends on the state of multiple cells in the previous state, whereas Markov chains are stochastic and each state only depends on a single previous state (which is why it's a chain). You could address the first point by creating a stochastic cellular automaton (I'm sure they must exist), or by setting all the probabilities to 1. You could create a state for each possible universe of states (so if you had a 3x3 grid and each cell could be on or off, you'd have 2^9 = 512 states) and then create a Markov chain to represent the entire universe, but I'm not sure how useful that would be. If you created a grid purely of Markov chains as you suggest, then each point in the cellular automaton would be independent of each other point, and all the interesting emergent behaviours of cellular automata come from the fact that the states of the cells are dependent on one another. I suspect a cellular automaton is a more general system than a Markov chain in that each state has multiple inputs (but then it is less general in that all the probabilities are generally equal to 1).
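The 512-state idea can be sketched in a few lines. This is only an illustration under stated assumptions: the update rule (Conway's Game of Life, with cells outside the 3x3 grid treated as dead) and the helper names `encode`, `decode`, `life_step` and `successor` are not from the discussion. Each whole grid becomes one state, and because the rule is deterministic, every state has exactly one successor that it reaches with probability 1.

```python
SIZE = 3  # 3x3 grid, so 2**9 = 512 whole-grid states


def encode(grid):
    """Pack a tuple of 9 cells (each 0 or 1) into an integer in 0..511."""
    return sum(bit << i for i, bit in enumerate(grid))


def decode(state):
    """Unpack an integer state back into a tuple of 9 cells."""
    return tuple((state >> i) & 1 for i in range(SIZE * SIZE))


def life_step(grid):
    """One Game of Life update; cells outside the grid count as dead (assumption)."""
    new = []
    for r in range(SIZE):
        for c in range(SIZE):
            neighbours = sum(
                grid[rr * SIZE + cc]
                for rr in range(max(0, r - 1), min(SIZE, r + 2))
                for cc in range(max(0, c - 1), min(SIZE, c + 2))
                if (rr, cc) != (r, c)
            )
            alive = grid[r * SIZE + c]
            new.append(1 if neighbours == 3 or (alive and neighbours == 2) else 0)
    return tuple(new)


# successor[s] is the one state reached from s; as a Markov chain, the
# transition matrix would have a single 1 in row s, at column successor[s].
successor = [encode(life_step(decode(s))) for s in range(2 ** (SIZE * SIZE))]

print(len(successor), "states")
```

A stochastic variant could replace the single successor with a probability distribution over successors, at the cost of a 512x512 transition matrix.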
Difference between deterministic and stochastic models. Definitions of stochastic processes, state space and time, mixed processes, the Markov property. Random walks, transition probabilities, first passage time, recurrence, absorbing and reflecting barriers, gambler's ruin problem. General theory of Markov chains: transition matrix, Chapman-Kolmogorov equations, classification of states, stationary distribution, convergence to equilibrium. Application of Markov chain models, e.g. no-claims discount, sickness, marriage. Estimation of probabilities, simulation and assessing goodness-of-fit. Simple examples of time-inhomogeneous Markov chains.

Poisson process, inter-event times, Kolmogorov equations. Time-inhomogeneous processes with generalisation of earlier examples (sickness, death, marriage models). Use of maximum likelihood for estimating transition intensities. Binomial and Poisson models of mortality. Maximum likelihood estimators for the probability of death. Simulation of random walk, Markov chain and Markov jump processes with both time-homogeneous and time-inhomogeneous examples.
